
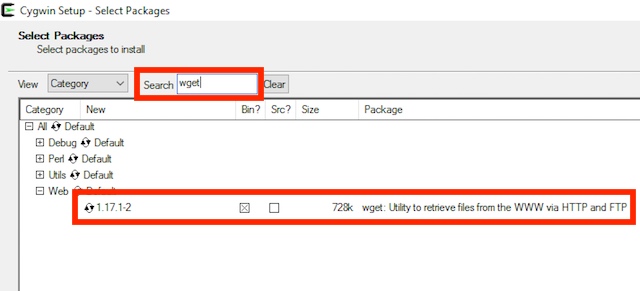

Newer isn't always better, and the wget command is proof. First released back in 1996, this application is still one of the best download managers on the planet.

This section explains some of the use case scenarios for Wget.

One of the most basic and common use cases for Wget is to download a file from the internet. When you already know the URL of a file to download, this can be much faster than the usual routine of downloading it in your browser and moving it to the correct directory manually. Even from this simplest usage, you can probably see a few ways of utilising Wget for automated downloading, if that's what you want.

Wget can archive a complete website whilst preserving the correct link destinations by changing absolute links to relative links. In case of a dynamic website, some additional options for conversion into static HTML are available:

$ wget -r -np -p -E -k -K 'target-url-here'

Wget also provides options for bypassing download-prevention mechanisms:

$ wget -r -np -k --random-wait -e robots=off --user-agent "Mozilla/5.0" 'target-url-here'

And if third-party content is to be included in the download, the -H switch can be used alongside -r to recurse to linked hosts.

Wget uses the standard proxy environment variables. To authenticate against a proxy, pass the credentials on the command line:

$ wget --proxy-user "DOMAIN\USER" --proxy-password "PASSWORD" URL

Proxies that use HTML authentication forms are not covered.

To have pacman automatically use Wget and a proxy with authentication, place the Wget command into /etc/nf, in the section:

XferCommand = /usr/bin/wget --proxy-user "domain\user" --proxy-password="password" --passive-ftp -q --show-progress -c -O %o %u

Warning: Be aware that storing passwords in plain text is not safe. Make sure that only root can read this file with chmod 600 /etc/nf.
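As a rough illustration of how the archiving options fit together, here is a minimal shell sketch. The function name `mirror_cmd` and its `span` argument are hypothetical, introduced only for this example. It prints the Wget command line rather than running it, so the result can be inspected before anything is downloaded.

```shell
#!/bin/sh
# Hypothetical helper (not part of Wget): print the wget command line
# for archiving a site, without executing it.
# Usage: mirror_cmd URL [span]
mirror_cmd() {
    url=$1
    span=${2:-}
    # -r  recurse into links        -np never ascend to the parent directory
    # -p  fetch page requisites     -E  save HTML/CSS with matching extensions
    # -k  convert links for local viewing
    # -K  keep the unconverted originals with a .orig suffix
    opts="-r -np -p -E -k -K"
    # -H lets the recursion follow links to other hosts (third-party content)
    if [ "$span" = "span" ]; then
        opts="$opts -H"
    fi
    printf "wget %s '%s'\n" "$opts" "$url"
}
```

Calling `mirror_cmd 'target-url-here' span` prints the command with -H appended. Leaving out the second argument keeps the download on the original host.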
